If you are currently working on a legacy project and you want to make your life easier, then this article is for you. Recently, I started working on a high-traffic, high-profile fashion website. Due to a time-sensitive warehousing relocation, the website's order management system had to completely change by a fixed date. As a result, the focus was on getting something working in time. Like a lot of things that are done in a rush, the codebase and the release process suffered. The team hit all the business objectives and now has some time to focus on improving things. This raised two questions. First, how should the team tackle these improvements? Second, how can we create some metrics around these changes so we can track progress? In essence, how can we tell if the team is making good, meaningful progress rather than just doing busywork?

In a stroke of fortuitous timing, the folks over at NDepend got in contact with me on the exact same day the team started thinking about how to refactor the codebase. For those of you who have never looked at NDepend before, it's a tool that integrates with Visual Studio and claims to help with things like:

- Smart Technical Debt Estimation live in VS

- Rules and code analysis through LINQ queries

- CI reporting

If you are interested in learning how my experiment with NDepend went, and whether you can use it to make your life easier, then read on.

How Bad Is The Codebase?!?!?

When I start working with a new client, one of the first things I do is figure out how bad things are. It's all very well having the team say 'the codebase is crap'. However, if you can't be specific about how bad things are, what really needs fixing, and how long it will take, then it will be very hard for a product owner to sign off the time required to make things better. My personal opinion is that all companies should set aside a chunk of time to fix code smells in every sprint. I also think I deserve to win the lottery. In reality, you need to be realistic, and sometimes this is not possible. If you want to improve your codebase, you will very likely have to sell the concept to someone in order for them to sign off the time.

Previously, this was pretty difficult. I've used tools like SonarQube to determine how 'good' a codebase was. However, this involved setting up a continuous integration pipeline, which took months of effort. The feature I was most optimistic about within NDepend was its ability to generate a code quality report directly within Visual Studio with a few clicks.

Getting Started With NDepend

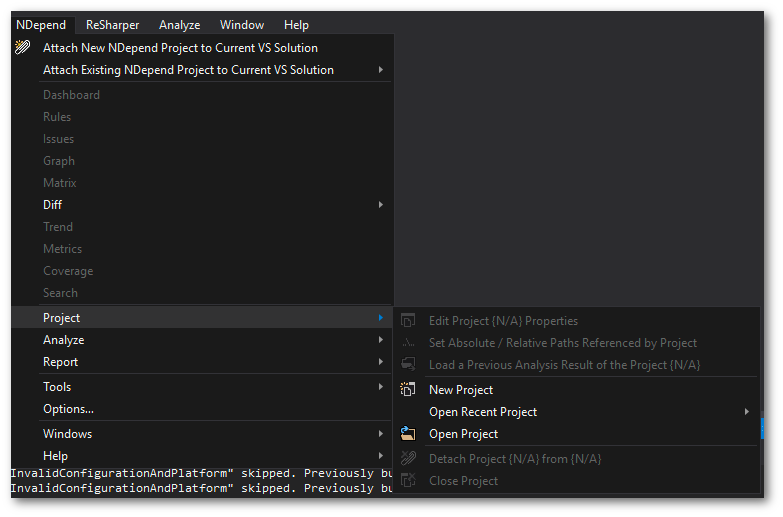

Installing NDepend is idiot-proof: you download an installer, run it, click a few buttons and it's installed. After NDepend is installed locally, you will see an NDepend menu option within Visual Studio. Before you can generate an NDepend code quality report, you will need to create a new NDepend project. To do this within Visual Studio, open up the solution you want to generate the report from and, within the NDepend menu, select:

Project ➡ New Project

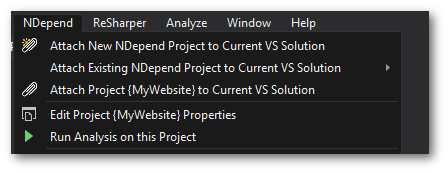

The NDepend project folder is where all the NDepend config settings relevant to your solution will be stored. It does not affect your end website and it will not be released into your production environment, so you can name it whatever you want. After you have created an NDepend project, you can then select the option to run the analysis on this project:

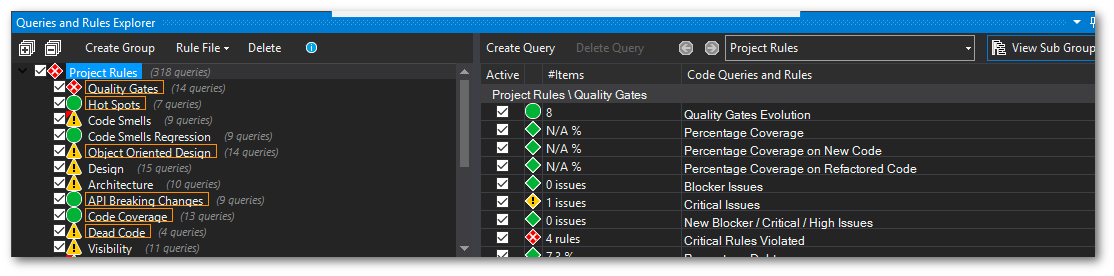

It's that simple. The report gets generated as a standalone HTML website, so you can email it to the team. You can also see the report in Visual Studio. I wasn't really too sure what to expect from the report at first. However, it contained loads of useful insights about our codebase, including:

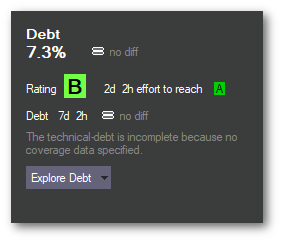

- Total % of debt in the codebase

- The estimated time it would take someone to fix all the code smells

- A huge list of the NDepend rules, and which classes break those rules
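To make the relationship between those three figures concrete, here is a minimal sketch of how a total fix time and a debt percentage could be derived from per-rule estimates. It is not NDepend's own calculation or API. The `RuleResult` type, the rule names, the numbers, and the `rewrite_minutes` parameter (an assumed estimate of the effort to write the codebase from scratch) are all hypothetical and only illustrate the arithmetic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RuleResult:
    """One rule from a hypothetical export of a code quality report."""
    rule: str
    violations: int
    fix_minutes: float  # assumed estimate to fix every violation of this rule


def summarise(results, rewrite_minutes):
    """Return total fix time, debt percentage, and rules ordered by fix time."""
    total = sum(r.fix_minutes for r in results)
    # Assumption: debt % = estimated fix time / estimated effort to rewrite.
    debt_percent = 100.0 * total / rewrite_minutes
    worst_first = sorted(results, key=lambda r: r.fix_minutes, reverse=True)
    return total, debt_percent, worst_first


# Made-up figures, purely for illustration.
results = [
    RuleResult("Methods too big", 12, 360.0),
    RuleResult("Types with too many methods", 3, 240.0),
    RuleResult("Namespaces mutually dependent", 5, 600.0),
]
total, debt_percent, worst_first = summarise(results, rewrite_minutes=24_000.0)

print(f"Estimated fix time: {total / 60:.1f} hours ({debt_percent:.1f}% debt)")
for r in worst_first:
    print(f"  {r.rule}: {r.violations} violations, {r.fix_minutes / 60:.1f} h")
```

Sorting by estimated fix time is just one possible ordering. A team might equally sort by violation count or by how often the affected files change.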

One really nice thing about the NDepend report is that it's created within Visual Studio. When you click on a violation outlined in the report, it opens up the file and places the cursor on the exact line of code that is failing the rule. This makes fixing things a lot easier compared to using a report generated in the CI/CD pipeline. You can start at the top of the list and then work your way down.

- The total estimated time to fix all the issues.

This total estimated time to fix all the debt was, surprisingly, one of the most useful metrics. Historically in this team, when people had tried to get some time set aside to improve things, their requests were denied. The business didn't reject the requests because they didn't want to improve things. Requests were rejected because no one could confidently say how long they needed to fix things and what would get fixed. Armed with the NDepend violations and the estimated time to fix them, we had a way of communicating between developers and the business to get refactoring time prioritised. With a timescale and a list of things that would get fixed, the request was approved! Without this report, it would have been a lot more difficult to get the time allocated. It was great!

Was NDepend Useful?

Longtime readers of this site will know that I'm into good development practices and solid software engineering processes. I don't like working in teams where the quality gates are manual. I like tools that can take subjective issues and turn them into binary decisions. For this project, NDepend definitely helped increase code quality, and its report generated much-needed buy-in from the business to spend dev time fixing things.
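As a rough illustration of what "turning a subjective issue into a binary decision" can look like, here is a minimal sketch of a gate that passes only when a change introduces no new violations compared with a baseline. This is not how NDepend implements its quality gates. The function names and the `(rule, code element)` pairs are hypothetical, and in practice the two sets would come from whatever your analysis tool exports.

```python
def new_violations(baseline, current):
    """Return violations present in `current` but not in `baseline`."""
    return sorted(set(current) - set(baseline))


def gate_passes(baseline, current):
    """Binary decision: True only if the change adds no new violations."""
    return not new_violations(baseline, current)


# Each violation is a (rule, code element) pair; the values are made up.
baseline = {
    ("Methods too big", "OrderService.Process"),
    ("Types too big", "BasketController"),
}
current = {
    ("Methods too big", "OrderService.Process"),
    ("Methods too big", "WarehouseClient.Sync"),
}

added = new_violations(baseline, current)
print("PASS" if gate_passes(baseline, current) else f"FAIL: {added}")
```

Note that this gate only looks at what was added. Fixing an old violation while introducing a new one still fails, which keeps the answer a strict yes/no rather than a net score that people can argue about.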

Before I installed NDepend, I had a preconceived notion that there would be a steep learning curve before I would be able to get any benefit from using it. This bias even put me off trying it for a few weeks. In fact, figuring out how to generate a report is simple. Within about 10 minutes of installing it, I had a report that gave me a much clearer picture of which areas of the project contained the most smells and which classes we should prioritise.

The thing I like best about the NDepend report is that it's all within Visual Studio, unlike something like SonarQube. I can generate a report on my dev machine, then click through the 'Queries and rules' explorer and fix things quickly. After a few weeks of refactoring, the report came back with no errors 😊😊😊

NDepend made it really simple to start enforcing stricter coding standards across the team. It allowed us to introduce more binary standards into the code review process. If you have ever worked in a large team where everyone reviews code, one challenge is always getting a consistent standard. More senior people on the team will give harder and more in-depth feedback than junior people. As code reviews can be very subjective and open to individual preferences, what counts as 'good' code can be open to debate. When the process was turned into a binary question (does your commit add extra code smells as defined by NDepend, yes or no?), life became a lot simpler. If you have never tried using NDepend, I recommend you give the free demo a try and see if it works for you. Happy Coding 🤘