In this tutorial, you will learn about 10 common issues that slow down page load speed on an Episerver-powered website. Website performance is a critical concern on every project. Poor performance results in higher bounce rates, which can lead to more complaints, lost revenue and a damaged reputation.

There are countless articles that explain why speed matters. Google uses site speed as a ranking factor when deciding where your pages appear in its search results. One study found that one in four people will leave your site if a page takes longer than four seconds to load.

I recommend that everyone building a new Episerver website blocks out at least a week's worth of effort in the project plan for the team to focus solely on performance before launch day. This raises the question: in that week, what should you test and where should you make your fixes? Below are 10 areas of your site that you should make sure run with greasy quick speed 🔥🔥🔥

Too Many HTTP Requests

Each image, script and CSS file that your web page relies on requires an HTTP request to fetch it. The more requests your web page makes, the longer it will take to load.

To make your web page load as quickly as possible, you will want to minimize the number of HTTP requests it makes. One way to check these requests is to use the [GiftOfSpeeds HTTP Requests Checker]. Once you understand which requests your page makes, there are a few common strategies to help reduce them. These include:

Combining Image Files: If your web page has a lot of images, it will generate multiple server requests in order to load them. Sometimes you cannot change which images your page needs to render, but in some instances the images can be combined into an image sprite. An image sprite is a collection of images put into a single image. Using sprites will reduce the number of server requests and save bandwidth.

Enable Keep-Alive Connections: If Keep-Alive is disabled on the server, the browser has to open a new TCP connection for every HTTP request. With Keep-Alive connections enabled, the browser can send multiple HTTP requests over the same TCP connection.

Limit The Amount Of Social Buttons: SaaS tools like 'Share This' produce an HTTP request for each social site that you use on your site. Reduce the number you use, reduce the number of requests 😉

Combine All JavaScript Files: Using a bundling tool to combine all of the site's JS into a single minified file means the browser makes one script request instead of many, which will boost performance.
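If you want a rough count of the requests a page will trigger before reaching for an online checker, a small script can do it. The following is a minimal sketch, assuming you already have the page's HTML as a string. The class name, function name and sample markup are my own illustrations, and it only looks at images, external scripts and stylesheets (fonts, CSS background images and AJAX calls are not counted).

```python
from collections import Counter
from html.parser import HTMLParser


class ResourceCounter(HTMLParser):
    """Counts tags that normally make the browser fetch another file."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "img" and attrs.get("src"):
            self.counts["images"] += 1
        elif tag == "script" and attrs.get("src"):
            # Inline scripts have no src and need no extra request.
            self.counts["scripts"] += 1
        elif tag == "link" and attrs.get("href"):
            rel = (attrs.get("rel") or "").lower().split()
            if "stylesheet" in rel:
                self.counts["stylesheets"] += 1


def count_resources(html: str) -> Counter:
    parser = ResourceCounter()
    parser.feed(html)
    return parser.counts


# Hypothetical sample markup, not taken from a real site.
SAMPLE_PAGE = """
<html><head>
  <link rel="stylesheet" href="/css/site.css">
  <script src="/js/jquery.js"></script>
  <script src="/js/site.js"></script>
</head><body>
  <img src="/img/logo.png"><img src="/img/hero.jpg">
  <script>console.log("inline, no extra request");</script>
</body></html>
"""

if __name__ == "__main__":
    counts = count_resources(SAMPLE_PAGE)
    print(dict(counts), "total:", sum(counts.values()))
```

For the sample page this finds one stylesheet, two scripts and two images, so five extra requests on top of the HTML itself. Bundling the two scripts would bring that down to four.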

Not Using Gzip Compression

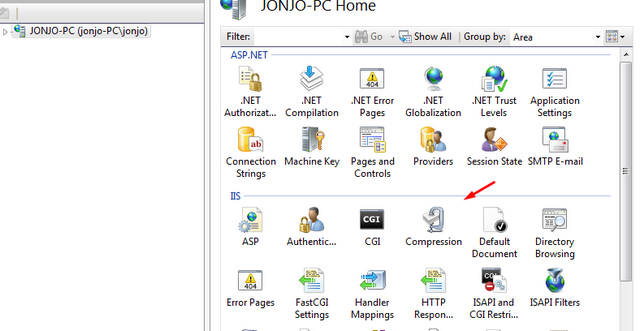

Based on the findings from this video, not enabling Gzip may result in a browser needing to download 70% more data from your server! Gzip compression can be enabled within IIS. With Gzip enabled, IIS will compress your website's files as they are returned to the browser. Compressed files are smaller, which reduces the time it takes to load a page. Adding Gzip is pretty easy. In your IIS site you should see a Compression option. Open it and enable compression.
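To get a feel for how much Gzip can save on text content, you can compress some markup yourself. This is a minimal sketch using Python's standard gzip module rather than IIS, and the HTML is a made-up, highly repetitive sample, so the saving it reports will be larger than you should expect on a real page.

```python
import gzip

# Hypothetical markup: a repetitive product list, invented for illustration.
html = (
    '<li class="product"><a href="/products/item">Item</a></li>\n' * 500
).encode("utf-8")

compressed = gzip.compress(html)
saving = 1 - len(compressed) / len(html)

print(f"original:   {len(html)} bytes")
print(f"compressed: {len(compressed)} bytes")
print(f"saving:     {saving:.0%}")
```

Text formats such as HTML, CSS and JavaScript compress well because they repeat themselves. Images such as JPEG and PNG are already compressed, so Gzip gains little there.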

Too Many Redirects

Sometimes you will need to set up redirects within your site. Probably the most common reason to set up a redirect is that the location or the name of a web page has changed. In these instances, setting up a 301 redirect will help reduce negative SEO impact.

When launching a brand new site, a lot of page URLs may change on launch day. Creating 301 redirects for all the key pages can save your ass SEO-wise. However, each redirect triggers an additional HTTP request-response cycle. If your site has to use redirects, Google suggests you follow these recommendations:

Avoid redirects to pages that redirect to other pages

Do not chain more than one redirect to reach a single resource

Minimize redirects from domains that don’t really serve content, such as redirects from misspelled versions of your domain

Use HTTP (server-side) redirects over JavaScript/meta (client-side) redirects whenever possible.

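Another good tip is to think about caching. Under the HTTP specification a 301 response is cacheable by default, while a 302 is only cached when additional headers, such as Expires or Cache-Control, say so. Setting these headers explicitly on your redirects lets the browser skip the extra round trip on repeat visits.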
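Redirect chains tend to creep in when a page is moved more than once. Here is a minimal sketch of how you could flatten a redirect list before launch, assuming you keep your redirects as a simple old-URL to new-URL mapping. The function name and the URLs are hypothetical, and this is not how Episerver or IIS stores redirects.

```python
def flatten_redirects(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source URL straight at its final destination."""
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until we reach a URL that does not redirect.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


# Hypothetical redirect list: /about-us.aspx takes two hops to resolve.
OLD_TO_NEW = {
    "/about-us.aspx": "/about-us",
    "/about-us": "/company/about",
    "/contact.aspx": "/contact",
}

if __name__ == "__main__":
    for source, target in flatten_redirects(OLD_TO_NEW).items():
        print(f"{source} -> {target}")
```

After flattening, /about-us.aspx points directly at /company/about, so the visitor pays for one redirect instead of two. A loop in the list raises an error instead of spinning forever.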

404s

We all know what a 404 'page not found' error is. When a page references an unknown page or asset, the browser still makes a full HTTP request for it and gets nothing useful back. Every broken link on a page reduces performance and wastes some of your server's bandwidth. These broken requests give no value to your customers. At scale, these pointless requests mean that your server cannot process as much real traffic, so eliminating them from your site is important.

I personally like to use Xenu's Link Sleuth to find these broken links. Xenu is a free Windows desktop app. You point it at your domain and it will spider the entire site and create a report of all the 404s it comes across. For an online alternative, you can also use W3C’s link checker. Run this report on your site and fix all the issues!

Enable Browser Caching

Before launching your site, you should update your web.config to enable browser caching on your static web files. All websites are composed of a certain number of static components, such as images, stylesheets and scripts, that rarely change. Instead of having your visitors continually download these files from your server, you can set them to be cacheable.

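When browsers cache assets, less bandwidth is used on your server and page load time improves. If you are using IIS7 and you want to cache static content, add a clientCache element to the system.webServer/staticContent section of your site's web.config, with a max-age that suits how often your static files change.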

Not Keeping the CMS Updated

You should aim to keep your Episerver instance patched to the latest version. Each new update usually has security and performance tweaks. Episerver follows a bi-weekly release cycle. By scheduling time to update Episerver within your site frequently, you should get some of the performance benefits without having to do any custom development. I recommend you update Episerver via NuGet at least once every two months.

Oversized Images

According to this research by Radware, 45% of the top 100 e-commerce sites do not compress their images. Over the years, I've seen content editors happily upload 10MB+ images onto the homepage. This tends to happen with newer editors who lack training and understanding. Having such a large image on your pages will kill page speed, so removing any of these large images from your site is important.

Historically, doing this in Episerver was easy. Yahoo used to provide a service called smush.it to compress images. Someone built an Episerver scheduled task that would automatically smush the images within your site at a regular interval. Smush.it is no longer supported; however, you should implement something like this within your site!
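Before you can compress anything, you need to know which images are the offenders. The following is a minimal sketch that lists oversized image files, assuming you have exported or copied your media to a folder on disk. The function name, the 500 KB threshold and the list of file extensions are my own choices, and it does not talk to Episerver's media library directly.

```python
import sys
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif", ".webp"}


def find_oversized_images(root: Path, max_bytes: int = 500_000) -> list[tuple[Path, int]]:
    """Return (path, size) pairs for images above max_bytes, largest first."""
    oversized = []
    for path in root.rglob("*"):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > max_bytes:
                oversized.append((path, size))
    return sorted(oversized, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Pass the folder to scan as the first argument; defaults to the current folder.
    folder = Path(sys.argv[1]) if len(sys.argv) > 1 else Path(".")
    for path, size in find_oversized_images(folder):
        print(f"{size / 1_000_000:.1f} MB  {path}")
```

The report gives you a worklist, largest files first, that you can hand to your editors or feed into whichever compression tool you pick.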

Not Setting Image Dimensions

A good HTML practice is to specify image dimensions on all of the images in your website. When the dimensions are specified, the browser can lay out the page while it is still downloading the image. This will result in a much better user experience, as it will minimise any potential render blocking and layout shifting. Remember, until the browser knows an image's dimensions, it will not be able to allocate a box for that image on the page 🤔
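Checking every template by eye is tedious, so a quick script helps. This is a minimal sketch that lists the images in a chunk of HTML that lack a width or height attribute. The class name, function name and sample markup are hypothetical, and it cannot see dimensions that are set through CSS.

```python
from html.parser import HTMLParser


class MissingDimensionFinder(HTMLParser):
    """Collects the src of every <img> that lacks a width or height attribute."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attrs = dict(attrs)
        if not attrs.get("width") or not attrs.get("height"):
            self.missing.append(attrs.get("src") or "(no src)")


def images_missing_dimensions(html: str) -> list[str]:
    finder = MissingDimensionFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical sample markup, invented for illustration.
SAMPLE = """
<img src="/img/logo.png" width="120" height="40">
<img src="/img/hero.jpg">
<img src="/img/banner.jpg" width="800">
"""

if __name__ == "__main__":
    for src in images_missing_dimensions(SAMPLE):
        print("missing width/height:", src)
```

In the sample, the hero and banner images are reported because each is missing at least one of the two attributes.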

Put Scripts at the Bottom of the Page

When a browser requests a page from a server, the HTML is streamed to the browser. Render blocking can occur when the browser needs to wait for something to download before it can continue processing the rest of the page. If the browser encounters an external stylesheet or script tag, it will wait for the file to download. You can speed things up by telling the browser to use asynchronous requests. If you place your JavaScript files at the bottom of your page, or use the async attribute, the browser will continue to render the page without waiting. Doing this means that your web page will seem to load quicker, as your visitor will be able to see the page sooner.
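To find the scripts most likely to hold up rendering, you can scan a page for external scripts in the head that have neither async nor defer. This is a minimal sketch under that assumption. The names and sample markup are hypothetical, and module scripts are skipped because browsers defer them by default.

```python
from html.parser import HTMLParser


class BlockingScriptFinder(HTMLParser):
    """Collects external scripts in <head> that lack async and defer."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            attrs = dict(attrs)
            # Boolean attributes such as async appear as keys with a None value.
            is_deferred = (
                "async" in attrs
                or "defer" in attrs
                or (attrs.get("type") or "").lower() == "module"
            )
            if attrs.get("src") and not is_deferred:
                self.blocking.append(attrs["src"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def blocking_scripts(html: str) -> list[str]:
    finder = BlockingScriptFinder()
    finder.feed(html)
    return finder.blocking


# Hypothetical sample markup, invented for illustration.
SAMPLE = """
<html><head>
  <script src="/js/analytics.js" async></script>
  <script src="/js/jquery.js"></script>
  <script src="/js/site.js" defer></script>
</head><body>
  <p>Content</p>
  <script src="/js/footer.js"></script>
</body></html>
"""

if __name__ == "__main__":
    for src in blocking_scripts(SAMPLE):
        print("blocking script in head:", src)
```

Only /js/jquery.js is reported for the sample. The footer script is ignored because it already sits at the bottom of the page.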

To help make sure your web pages load as quickly as possible, I've covered the top 10 mistakes I've seen web developers make when building a website, in the hope that you don't make the same mistakes. Happy Coding 🤘